I wonder if we should be inviting Colm Massey from ISE (data specialist) and/or Karen Christiansen into this community/conversation? They have been doing some work on data mapping of coops …

at the risk of repetition, I’m thinking that it might be still a very good idea to consider the formats of the mapping data to be gathered, and ensure it is as portable / interoperable / open as we can make it, all before someone starts putting hours in to this good work?

It is motivating to produce something that can be reused by others in other co-operative ways, maybe ways that were not envisaged to begin with.

It feels like there are a couple of threads in here :

- what data and what format is useful for now and the future

- how much are people prepared to invest in open data commons

Openness and decentralisation have a cost (in my experience). For example,

do people value it enough to pay for it, either with some server, or with

installing a piece of software on their computer?

I’m all for it, but am technical. I think whatever solution is proposed

would be better if it doesn’t need more passwords + logins, and can be

close to other useful tools / flows.

Hi @Sion. I’m the person at ISE who has been doing most of our work on data and mapping, so no need to invite Colm on those grounds. But it would be good to see him here anyway! I’ll suggest to him that he joins.

Contracts are definitely good candidates for data. It brings up a basic question for me:

- How do we know that ‘TableFlip’ here is the same as ‘tableflip’ that appears in some other dataset, which may well have been developed independently of this contract data?

I realise that your example was given as a sketch; I am taking this as an opportunity to mention something that I feel is important and fundamental. I make no apologies for using this as an opportunity to say more about Linked Data (you may notice a pattern in my posts!)

If we were modelling this using Linked Data, then instead of the string ‘TableFlip’, we’d have something like

https://data.cotech.coop/id/members/TableFlip

This is a URI (Uniform Resource Identifier). It is globally unique. Although it looks like a URL, it is just a string that identifies the thing ‘TableFlip’. CoTech has the right to ‘mint’ URIs in its domain. If it is a well-behaved URI, then it will also be dereferenceable - i.e you can get a useful response when you GET it over HTTP: a human-readable response if a web browser asks for HTML, and machine-readable response (e.g. JSON, and many other formats) if a computer program asks for machine-readable response.

By inserting this URI into our data, as opposed to the string ‘TableFlip’, programs that use this data can ‘follow the links’ to other information about TableFlip, which may also be presented as links … to yet more information. In this way, a Web of Data is created.

It is also possible that the best source of Linked Data about TableFlip actually is available through a different URI. For example

https://data.tableflip.coop/id/about-us

In that case, we’d have Linked Data that declares that both URIs refer to the same thing. Things can have more than one name (identifier).

Yes. A couple of threads, at least! I’ve suggested that a category for Data or Data & Mapping is created: Forum Categories - #4 by mattw . Although it may be that we have to create new threads before that will happen.

Yes. The question about investment is pretty crucial. In espousing the virtues of using Linked Data, I’m also aware that there’s a learning overhead, and more time is required to put something in place that is intended for general use, rather than for the use of a single application. I’m certainly right behind the idea of an open data commons, as a key infrastructure layer to support digital tools for the Solidarity Economy, and can invest some time to this end.

Hey @mattw, pretty sure we’re on the same page. I’d go further with the linked data - it should be the hash of a message, so that it’s a content-addressable location, not a URL ![]()

The system I’m running (scuttlebutt) for a bunch of my business already has this built it. Would love to nerd out about it if you’re keen sometime.

@asimong funnily enough years at the coalface of linked data have made me feel its better to collect and publish the data first and then worry about the format. The world has a hell of a lot of unpopulated schemas/ontologies and not enough instance data.

I like @mixmix’s idea of minimum viable data (though I think we might as well make the ‘500 NZD’ bit stuctured ![]() )

)

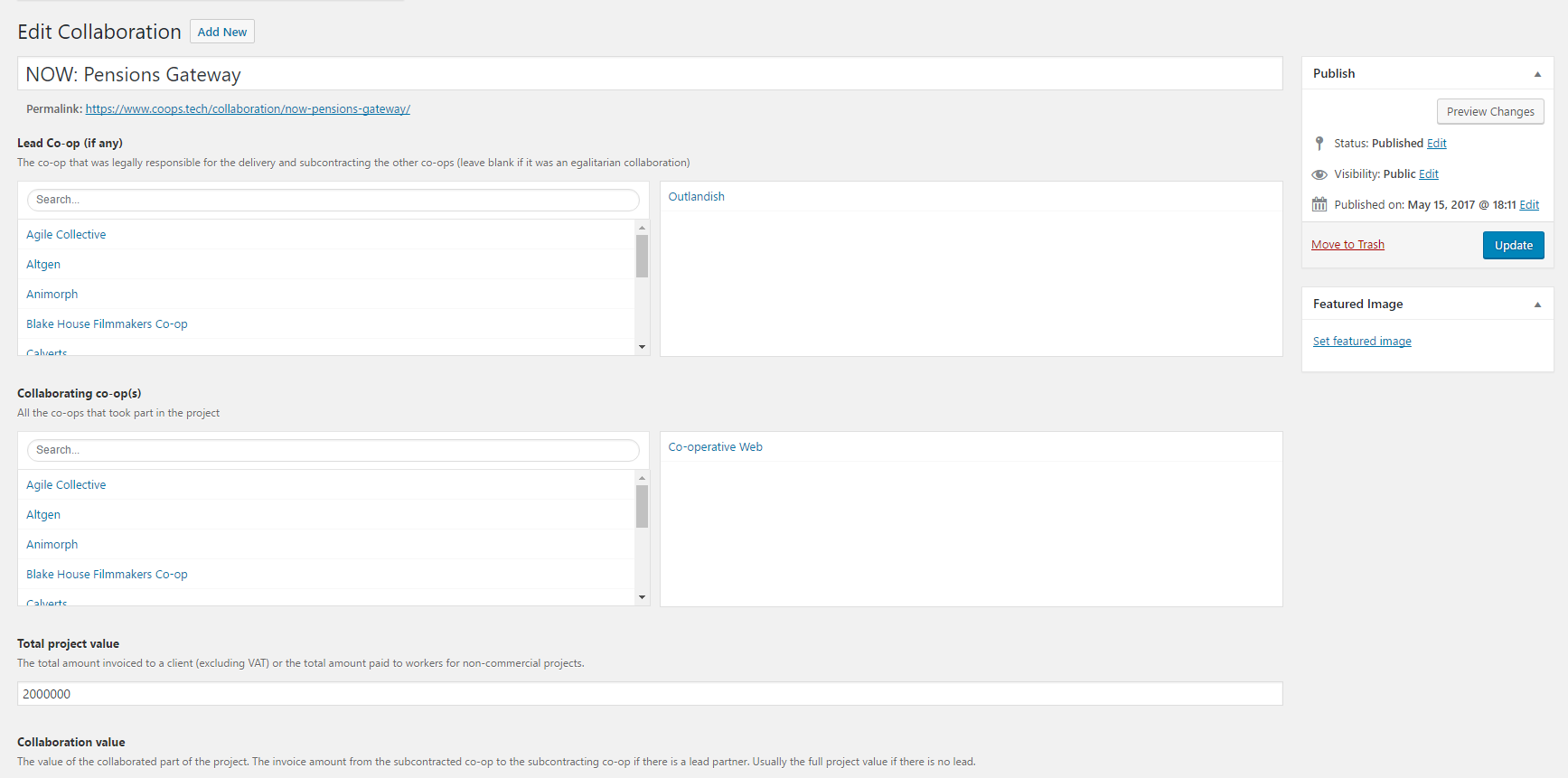

I’ve added Collaborations to the website (https://www.coops.tech/wp/wp-admin/post.php?post=2037&action=edit)

We’re just creating a pull request that will allow us to fetch the data out as JSON. It won’t be in JSON-LD but it will hold URIs for each co-op, etc. It would be fairly easy to add a JSON-LD endpoint if someone had a use case.

Harry @harry that’s great to hear that format is less important to people, one less thing to worry about… does that mean that, in your experience, the data is easy to transform from one format to another? I expect it is, if it follows any established linked data format, ultimately resolvable into triples / quads…

But thanks to your drawing attention to it, I think I didn’t express myself clearly. I’m absolutely not worried about matters like e.g. JSON-LD v. Turtle. So I’ll edit my post to replace the word “formats”, as I can see that means something I didn’t intend. In my (interoperability/portability) experience, what matters is the underlying – well, I can’t think of a better word than “ontology”, though that’s not everyone’s favourite word. (Goodness me, the Ontology Outreach Advisory is 10 years old already, where did that time go?)

OK, let’s get beneath the word “ontology”. I guess we share the recognition that having different data sets with similar, but different, and overlapping meanings can play havoc with interoperability or portability, and indeed the very linking of the data. Or don’t you think so?

What I mean is, can we agree on what our data represents, before trying to link it? That’s the essence of ontology, to me. If we are mapping co-ops and their relationships, it’s to say the least useful if we agree on what a co-op is, what properties and relationships they may have, etc. etc. Or, again, do you have a deeper view than this?

In my experience even very well resourced organizations such as BBC and New York Times fail to unlock the interoperable potential of datasets and end up wasting a lot of time on issues of second degree reification, etc.

I’d suggest that we focus on publishing the data simply in plain JSON, just as Co-ops UK did. Someone can then map this to linked data concepts as @mattw did.

That way the project to collect and publish the data doesn’t need to be held up by the question of which ontology to use.

Or challenge we’ll face is trying to get people to type the right amount in the box that says “total project value - the amount going on the invoice excluding VAT” etc. If we can’t get that right with a very high degree of confidence then the finer subtleties of our chosen ontology will be irrelevant

Harry @harry, I’m trying to find common ground and understanding here – could I ask what you mean by this?

How could they, in your view?

You mention

Could I ask for some clarification here? What might be alternatives, in what situation?

I hope this is OK for people to go over these basics, and that it helps others as well as me!

how could they in your view?

When I was at the BBC they spent millions of quid modelling the olympics as an ontology and publishing all the events, teams, venues, etc as linked data. It was all loaded into a triplestore and queried. The aim was to enable some sort of data sharing/new apps/new use cases but as far as I’m aware, nothing ever came of the investment.

a question of what ontology to use

I wouldn’t use an ontology as I’ve never seen the benefits. I’d use a standard schema with sensible labels e.g.

"total_project_value": 20000,

"lead_partner": "http://coops.tech/co-ops/outlandish",

"project_partners": [

"http://coops.tech/co-ops/open-data-services",

"http://coops.tech/co-ops/cetis"

]

}```

The whoever is doing the big data mashup can map our "total_project_value" to whatever makes sense in their context, but it's not our problem to sort it our for them.JUST START is the take-away. We can iterate IF it’s useful.

Worst case someone spends an afternoon hand-migrate some data - it’s not like we’re running a large hadron collider or anything ![]()

But on a serious note, I think we can all agree that the core standardisation we need to focus our energy on is whether we’re running ![]() or

or ![]() case formatting. (bikeshed troll)

case formatting. (bikeshed troll)

Looks like an awesome start @harry !

Hi everyone,

A really interesting topic. Sorry this is a bit of a ‘long stream of conciousness’ post.

I agree with mixmix’s idea about just starting. Migrating data between formats is not a big job. Having said that, I have found that working with Python and Mongo has been good in the past for its flexibility of data storage, simplicity for linking with APIs, available tools for analysing data, and outputting all kinds of formats.

My approach to these things is to think about what questions you’d like to be answered. Perhaps I’ll get the ball rolling by putting a few out there and briefly putting some potential answers. I find a good approach is to put all the ideas out there at first, don’t worry if they’re not very feasible, someone else might come up with a nearby idea which is feasible. However, in a later stage it’s then good to choose the best/most feasible ones and run with them. But please take these ideas as simply being thought-provoking and feel free to critique/add to them.

-

What are the aims an objectives of the data collection? What’s the data for, why do we want to collect it?

+ These are always difficult questions to answer, which is why big corporations like the BBC can spend silly money on projects without having a clear reason as to why they’re doing it. I think the questions are important though. Sometimes “because we can” is a good enough answer, but I think it’s worthwhile to at least give this question some thought.

+ For me, I’m interested in the idea of understanding co-ops as a kind of ecosystem. Different co-ops have different relationships with one another, there are different ‘species’ of co-op that work in different niches, etc. I am interested in computer/mathematically modelling this ecosystem in some way, and data can always inform models. The larger goal would be that by understanding how the ecosystem works, we can adapt better to it. -

What classes of data would we want to collect?

+ Online data - the online presences of the different co-ops.

+ Financial/trading data - how co-ops trade with other companies.

+ Geo-location data - where the co-ops have offices and outlets, etc.

+ Member/employee data

+ Temporal data - how these things have changed over time -

What accessible data are currently out there?

+ I guess all cooperatives have some kind of Internet presence. One idea might be to make a collection of their websites. You could glean geolocation data from the sites and look at how the different sites link to one another. I have some thoughts about adapting a sampling algorithm, and network analysis tools, which I have already written, to do this.

+ A second kind of Internet presence might be on social media. I’ve done some work mapping Twitter accounts in the past and finding out how they link into groups (Twitter users forming tribes with own language, tweet analysis shows | Twitter | The Guardian). I could look into doing something along these lines with Twitter, for which I have quite a few API tools - however it might be quite limiting as it is linked mainly to Twitter. However, I’m always up for moving to other APIs. However, perhaps co-ops are often a little under the social media radar, so the social media approach might not be so great?

+ I guess companies house would have some records?

+ It might be possible to send out a questionnaire to co-ops to glean some of these data?

+ What have others already done which might be useful?

Very interesting, @djembejohn. I really hope we can collaborate in the not too distant future - we (Institute for Solidarity Economics) plan to work on data for the Solidarity Economy during the rest of 2017, extending the work we have already done in this area, and building on work done by others.

Here is a ‘Charter for Building a Data Commons for a Free, Fair and Sustainable Future’ that I helped to write, with others (Silke Helfrich, people from TransforMap, and RIPESS Europe) earlier this year:

https://discourse.transformap.co/t/charter-for-building-a-data-commons-for-a-free-fair-and-sustainable-future/1368

I think it sounds like we’re all on the same page (or the same place on two different pages, as @mixmix points out).

Page 1: we all agree it would be good to start collecting data on CoTech collaborations, and agree that the WordPress thing I made is an ok starting point.

Page 2: we all agree that it would be cool if some stuff happened with open data, and that it might have some important long term implications for the economy and/or the commons.

Can I suggest we start moving towards some actions? Personally I’d be up for doing the following to move things forward:

- Getting someone to deploy the updated version of the site to enable the JSON API

- Adding all of Outlandish’s collaborations to date

- Making any suggested refinements to the ‘data model’ that would make it more useful/accurate/etc.

Any other volunteers?

I definitely agree that starting is a good idea, and that gives us a platform from which to explore/learn/iterate, etc.

I do have my eyes on some of the long term aspirations, and there are a few very small things that could be done early to smooth the way (off the top of my head, I can think of only one!)

I’d find it easier to collaborate if we were tracking suggestions, issues, code, examples, documentation, etc on something other than discourse (much as I love discourse). I suggest creating a repository on github, and I’m happy to create one if there’s agreement (among those who want to work on this). If we want to go down this route, we should probably start a new thread to make it more visible within CoTech.

and my axe ! ![]()

![]()

I’m up for:

-

making a simple site which makes it easy to view the data from the API

making a simple site which makes it easy to view the data from the API -

entering future collaborations in

entering future collaborations in

IMO anything that makes this data more alive gives this initiative a better chance.

Let me know when you’ve got an API I can consume up @harry

future dreaming (distraction) - I’ve friends who’ve worked in this domain a bit with things like holodex

The code is already in github I believe: GitHub - cotech/website: The Cooperative Technologists WordPress website - see CoTech WordPress - CoTech for details

At the moment there is a bit of a bottleneck in that only @chris can deploy to the test and production servers at the moment (I believe). I’d like to be able to deploy at least to the test server - I believe we may need to join webarchitects to get access to their infrastructure?